Paper: Chubby

Chubby / Distributed Locking Service

Goal

- Design a highly available and consistent service that can store small objects and provide a locking mechanism on those objects.

What is Chubby?

- Chubby is a service that provides a distributed locking mechanism and also stores small files.

- Internally, it is implemented as a key/value store that also provides a locking mechanism on each object stored in it.

- It is used extensively inside Google to provide storage and coordination services for systems like GFS and BigTable.

- Apache ZooKeeper is the open-source alternative to Chubby.

- Chubby is a centralized service offering developer-friendly interfaces (to acquire/release locks and create/read/delete small files).

- Using it takes only a few extra lines of code in an existing application, without much modification to application logic.

- At a high level, Chubby provides a framework for distributed consensus.

Chubby Use Cases

- Chubby was developed primarily to provide a reliable locking service. Other use cases evolved over time, such as:

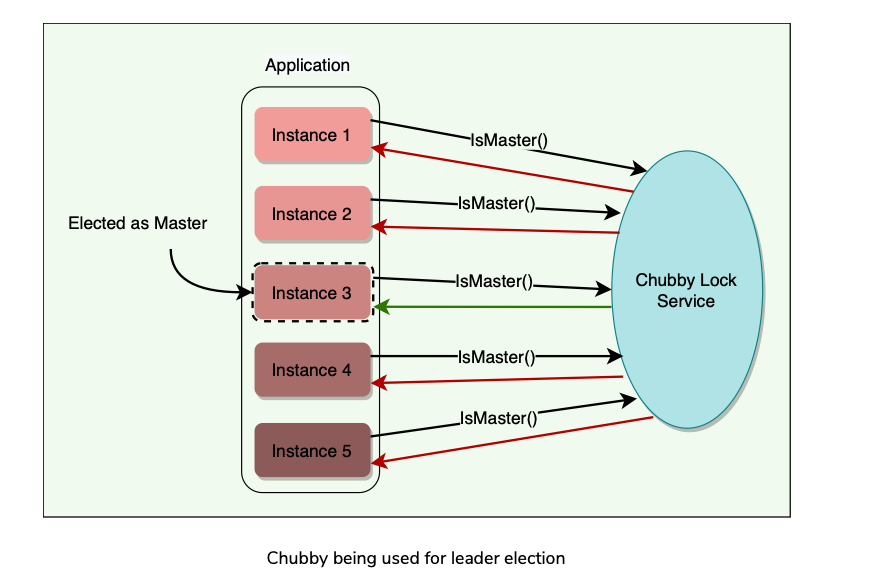

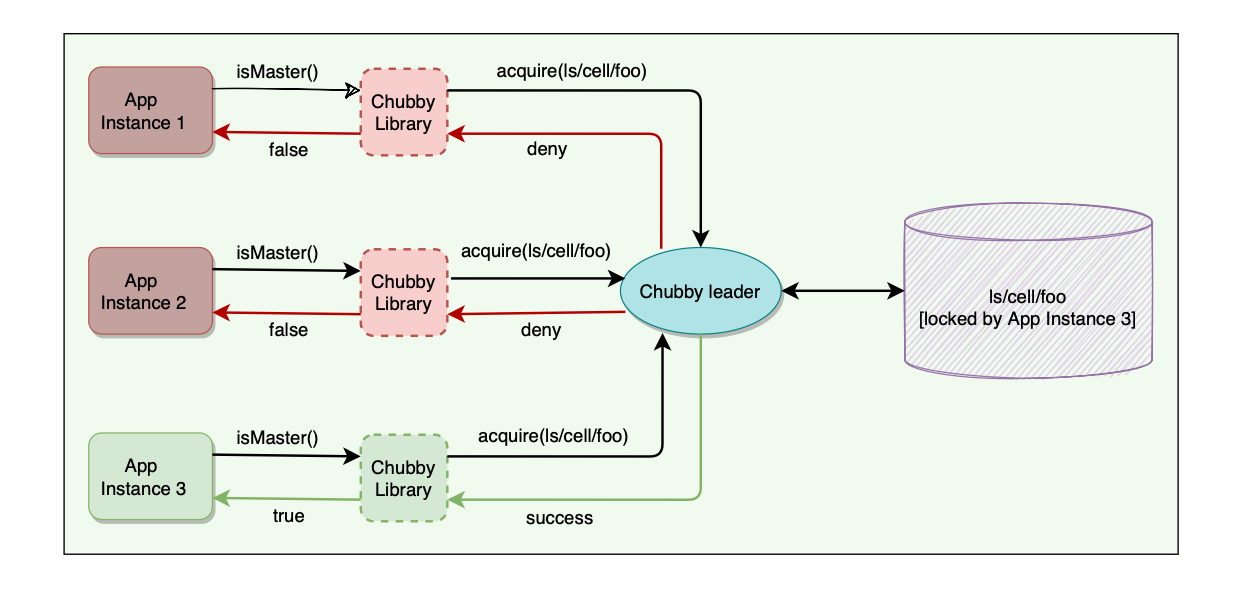

Leader Election

- Any lock service can be seen as a consensus service, as it converts the problem of reaching consensus to handing out locks.

- A set of distributed applications compete to acquire a lock, and whoever gets the lock first gets the resource.

- Similarly, an application can have multiple replicas running and wants one of them to be chosen as the leader. Chubby can be used for leader election among a set of replicas.

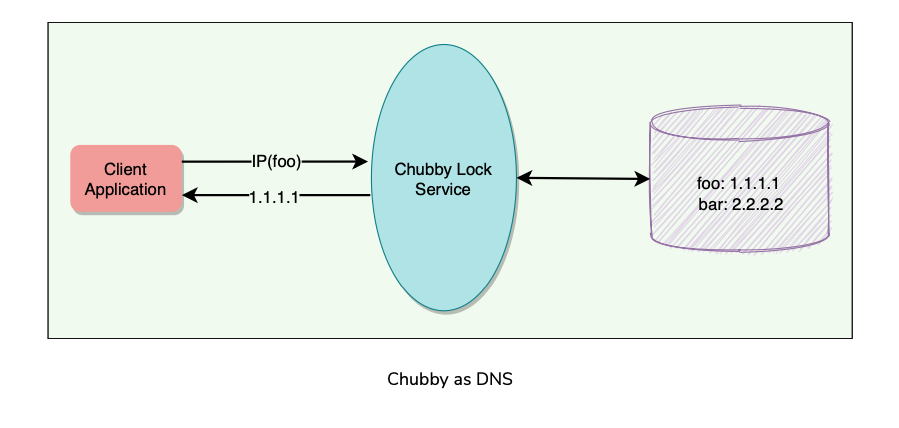

Naming Service(Like DNS)

- It is hard to make faster updates to DNS due to its time-based caching nature, which means there is generally a potential delay before the latest DNS mapping is effective.

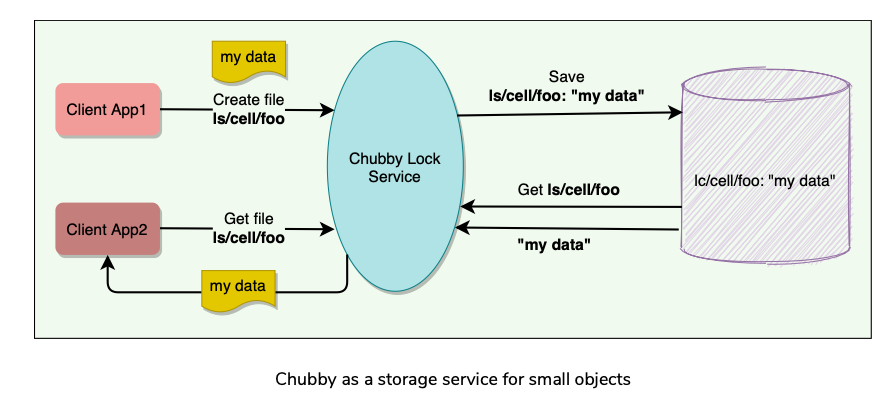

Storage(Small Objects that rarely change)

- Chubby provides a Unix-style interface to reliably store small files that do not change frequently (complementing the service offered by GFS).

- Applications can then use these files for any usage like DNS, configs, etc.

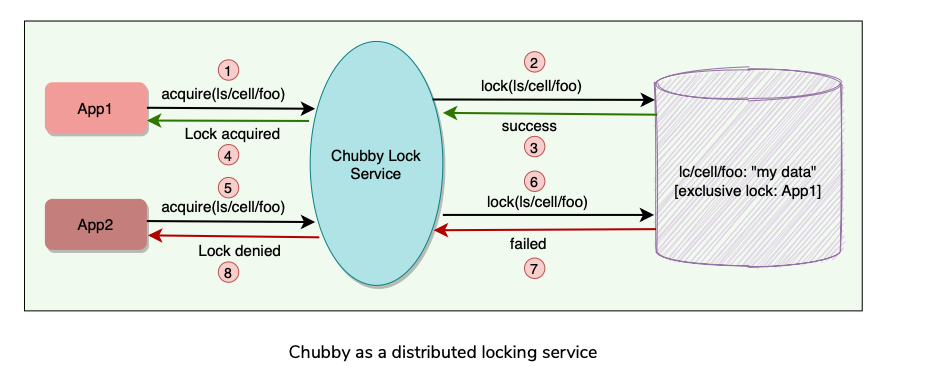

Distributed Locking Mechanism

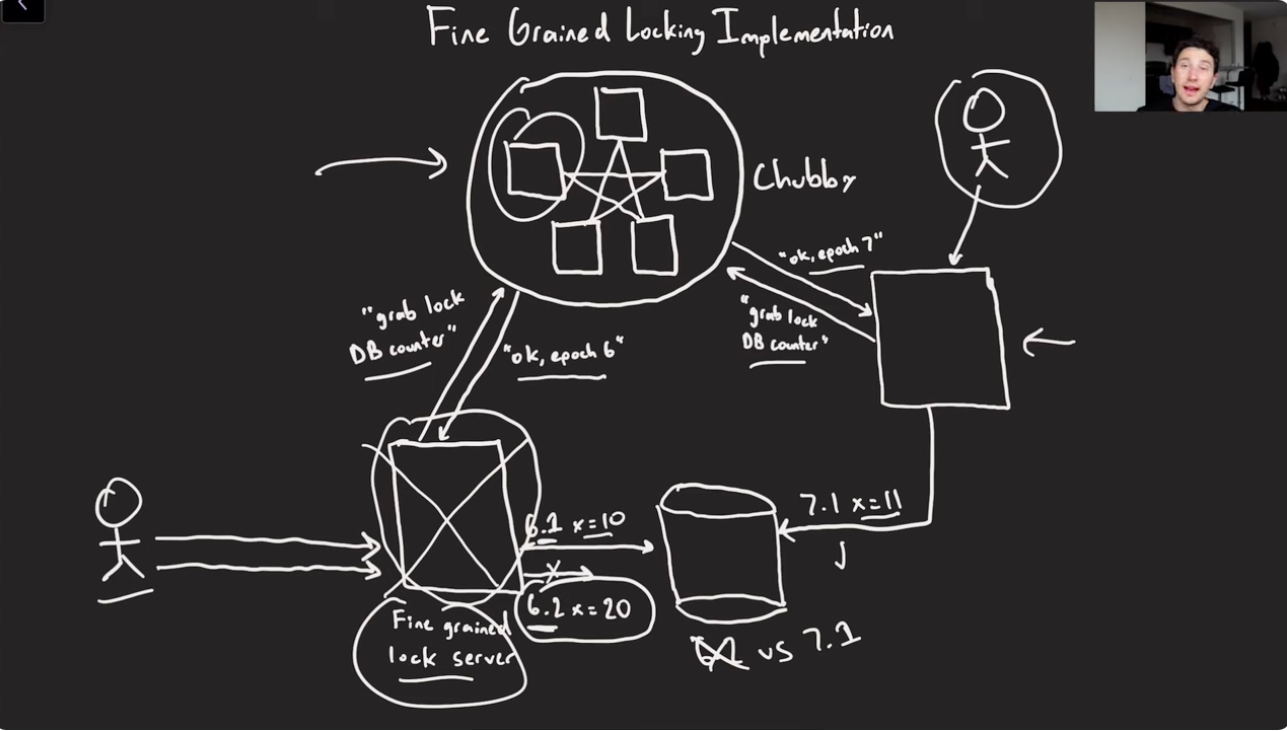

- Chubby provides a developer-friendly interface for coarse-grained distributed locks (as opposed to fine-grained locks) to synchronize distributed activities in a distributed environment.

- An application needs only a few lines of code, and Chubby takes care of all lock management, so developers can focus on business logic instead of solving distributed locking problems themselves.

- We can say that Chubby provides mechanisms like semaphores and mutexes for a distributed environment.

When Not to Use Chubby?

- Bulk Storage is needed

- Data update rate is high.

- Locks are acquired/released frequently.

- Usage is more like a publish/subscribe model.

Background

- Chubby is neither really a research effort nor does it claim to introduce any new algorithms.

- Rather, the Chubby paper describes a design and implementation done at Google to give its clients a way to synchronize their activities and agree (reach consensus) on basic information about their environment.

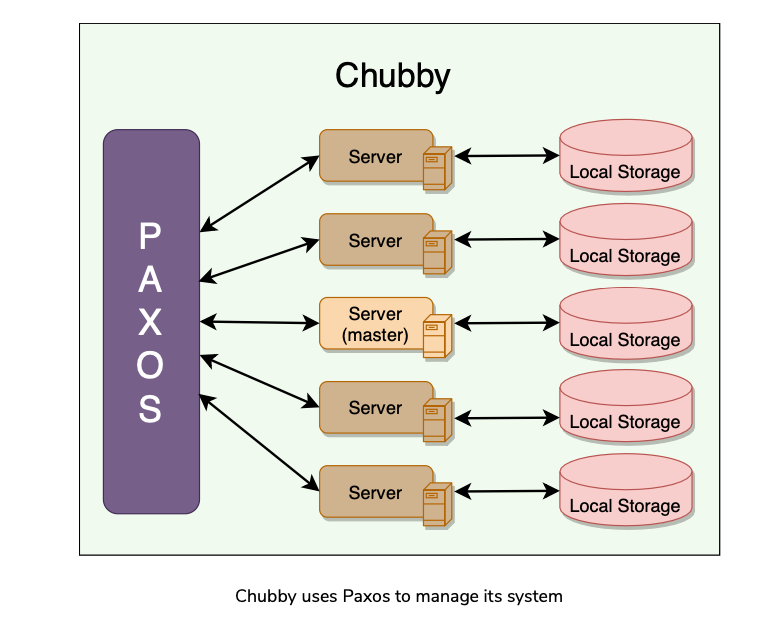

Chubby and Paxos

- Chubby uses Paxos underneath to manage the state of the Chubby system at any point in time.

- Getting all nodes in a distributed system to agree on anything (e.g., election of primary among peers) is basically a kind of distributed consensus problem.

- Distributed consensus over asynchronous communication is already solved by the Paxos protocol.

Chubby Common Terms

Chubby Cell

- Chubby cell is a Chubby Cluster. Most Chubby Cells are single Data Center(DC) but there can be some configuration where Chubby replicas exist Cross DC as well.

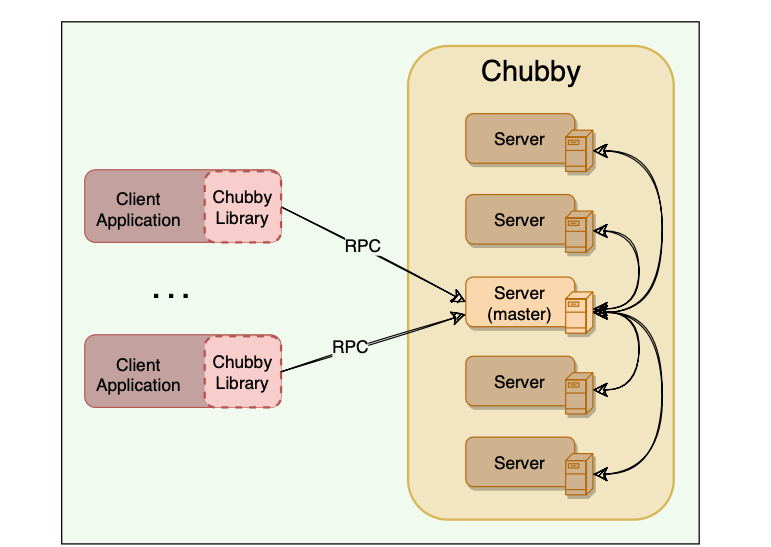

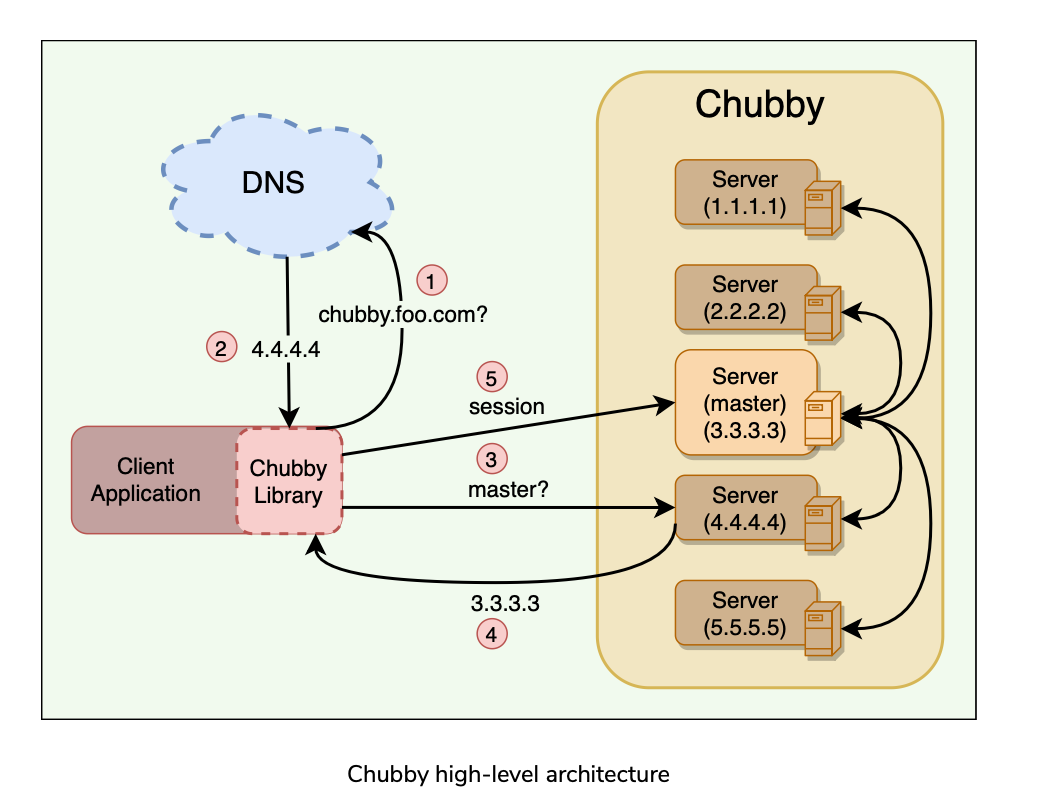

- Chubby cell has two main components, server and client, that communicate via remote procedure call (RPC).

Chubby Servers

- A Chubby Cell consists of a small set of servers(typically 5) known as Replicas.

- Using Paxos, one of the servers is selected as the master, which handles all client requests. If the master fails, another replica takes over.

- Each replica maintains a small database to store files/directories/locks.

- The master applies writes to its own local database and propagates them to the other replicas through the consensus protocol; a write is acknowledged once it has reached a majority of the replicas (Reliability).

- For Fault Tolerance, replicas are placed on different racks.

Chubby Client Library

- Client applications use a Chubby library to communicate with the replicas in the chubby cell using RPC.

Chubby API

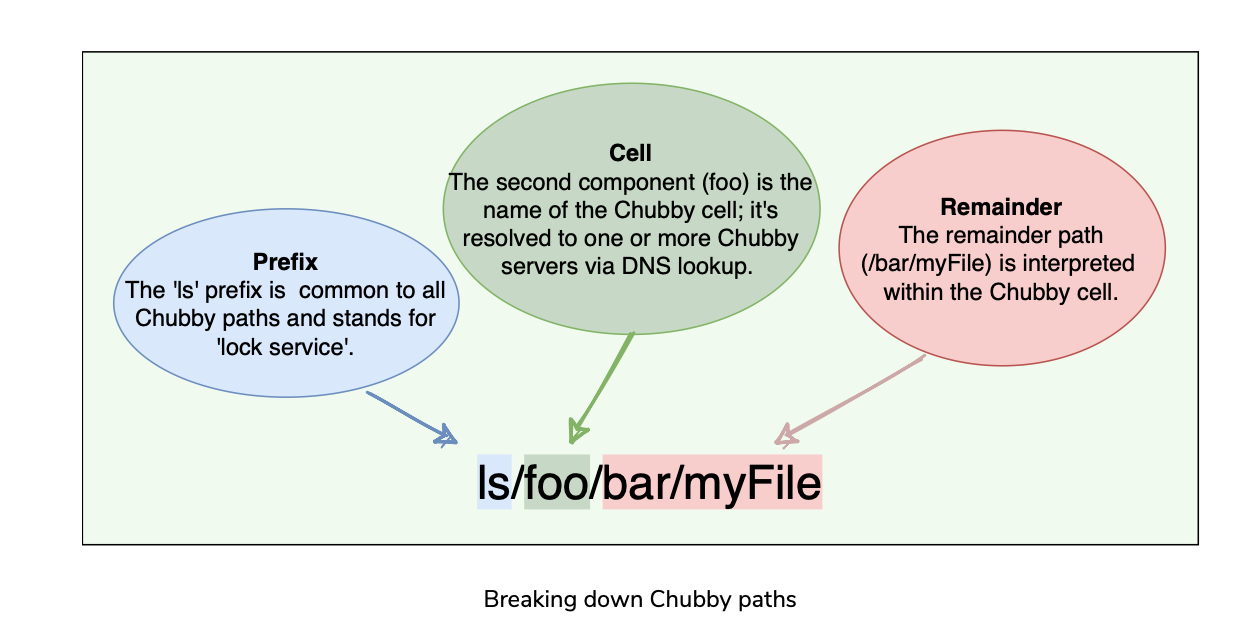

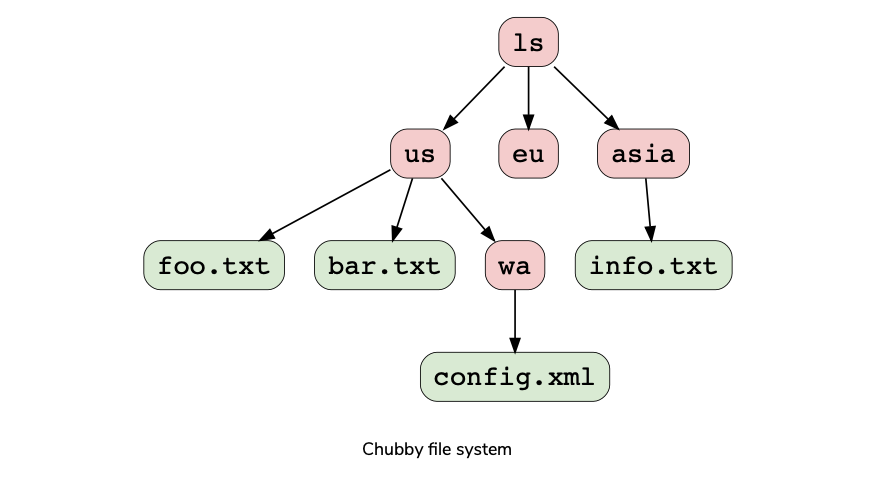

- Chubby exports a unix-like file system interface similar to POSIX but simpler.

- It consists of a strict tree of files and directories with name components separated by slashes. E.g. File format: /ls/chubby_cell/directory_name/…/file_name

- A special name, /ls/local, will be resolved to the most local cell relative to the calling application or service. What is the most local Cell?

- Chubby can be used for locking or storing a small amount of data or both, i.e., storing small files with locks.

- API Categories

General

- Open() : Opens a given named file or directory and returns a handle.

- Close() : Closes an open handle.

- Poison() : Allows a client to cancel all Chubby calls made by other threads without fear of deallocating the memory being accessed by them.

- Delete() : Deletes the file or directory.

File

- GetContentsAndStat() : Returns (atomically) the whole file contents and metadata associated with the file. This approach of reading the whole file is designed to discourage the creation of large files, as it is not the intended use of Chubby.

- GetStat() : Returns just the metadata.

- ReadDir() : Returns the contents of a directory – that is, names and metadata of all children.

- SetContents() : Writes the whole contents of a file (atomically).

- SetACL() : Writes new access control list information.

Locking

- Acquire() : Acquires a lock on a file.

- TryAcquire() : Tries to acquire a lock on a file; it is a non-blocking variant of Acquire.

- Release() : Releases a lock.

Sequencer

- GetSequencer() : Get the sequencer of a lock. A sequencer is a string representation of a lock.

- SetSequencer() : Associate a sequencer with a handle.

- CheckSequencer() : Check whether a sequencer is valid.

Chubby does not support operations like append, seek, moving files between directories, or making symbolic or hard links.

Files can only be completely read or completely written/overwritten. This makes it practical only for storing very small files.
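The naming scheme and the whole-file read/write semantics above can be sketched in a few lines. This is a minimal illustration, not the real client library: `split_chubby_path` and `FakeCell` are hypothetical names, the store is an in-memory dictionary, and the single `generation` counter is a simplified stand-in for the node metadata.

```python
from dataclasses import dataclass, field


def split_chubby_path(path: str) -> tuple[str, str]:
    """Split '/ls/<cell>/<rest>' into (cell, '/<rest>')."""
    parts = path.split("/")
    # parts[0] is '' because the path starts with '/'.
    if len(parts) < 4 or parts[0] != "" or parts[1] != "ls" or not parts[2]:
        raise ValueError(f"not a Chubby-style path: {path!r}")
    return parts[2], "/" + "/".join(parts[3:])


@dataclass
class FakeCell:
    """Hypothetical in-memory stand-in for one cell's node store."""
    files: dict[str, bytes] = field(default_factory=dict)
    generation: dict[str, int] = field(default_factory=dict)

    def set_contents(self, name: str, data: bytes) -> None:
        # Whole-file overwrite only: there is no append or seek.
        self.files[name] = bytes(data)
        self.generation[name] = self.generation.get(name, 0) + 1

    def get_contents_and_stat(self, name: str) -> tuple[bytes, int]:
        # Contents and metadata come back together in one call.
        return self.files[name], self.generation[name]


cell_name, node = split_chubby_path("/ls/foo/wombat/pouch")
cell = FakeCell()
cell.set_contents(node, b"v1")
cell.set_contents(node, b"v2")  # replaces the file; nothing is appended
```

Because every write replaces the whole file, a reader never observes a partially updated file in this model, which is the property the atomic `SetContents()` and `GetContentsAndStat()` calls are meant to give.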

Design Rationale

Agenda

- Why was chubby built as a service?

- Why coarse-grained locks?

- Why advisory locks?

- Why does Chubby need storage?

- Why does Chubby export a Unix-like file system interface?

- High Availability and reliability

Why was chubby built as a service rather than a distributed client library doing Paxos?

- Why build a distributed service instead of a client library that only provides Paxos distributed consensus? A lock service has clear advantages over a client library:

Why coarse-grained locks?

Chubby locks are not expected to be used in a fine-grained way, where a lock might be held for only a short period (i.e., seconds or less). For example, electing a leader is not a frequent event. Reasons why only coarse-grained locks are supported:

- Less load on the lock server

- Survive Lock server failures

- Fewer lock servers are needed:

- Clients can implement a fine-grained locking system on top of Chubby's coarse-grained locks if they need one.

Why advisory locks?

- Chubby locks are advisory, which means it is up to the application to honor the lock. Chubby doesn’t make locked objects inaccessible to clients not holding their locks.

- Chubby gave following reasons for not having mandatory locks:

Why does Chubby need storage?

- To provide a Consistent view of the system to various distributed entities in some use cases like:

Why does Chubby export a Unix-like file system interface?

- It significantly reduces the effort needed to write basic browsing and namespace manipulation tools, and reduces the need to educate casual Chubby users.

High Availability and reliability

- Chubby compromises on performance in favor of availability and consistency. What?

How Chubby Works?

Agenda

- Service Initialization

- Client Initialization

- Leader Election example using Chubby

Service Initialization

- A master is chosen among chubby replicas using Paxos.

- Current master information is persisted in storage and all replicas become aware of the master.

Client Initialization

- Client queries DNS to find the addresses of the Chubby replicas.

- Client calls Chubby Server directly via Remote Procedure Call(RPC)

- If that replica is not the master, it will return the address of the current master.

- Once the master is located, the client maintains a session with it and sends all requests to it until it indicates that it is not the master anymore or stops responding.

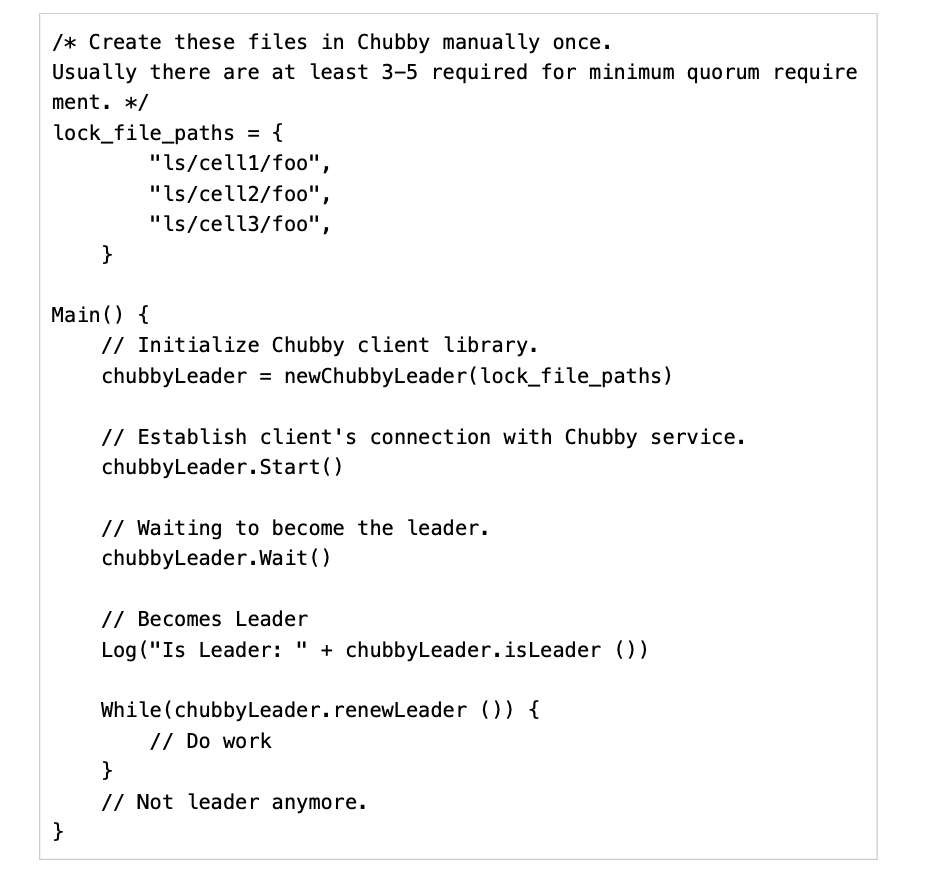

Leader Election example using Chubby

Example of application that uses Chubby to elect a single master from a bunch of instances of the same application.

Sample Pseudocode for leader election from client application.
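A minimal sketch of that election, assuming a hypothetical in-memory `FakeLockService` in place of a real Chubby cell (the real client library is not public, so none of these names are its API). Each replica tries to take the same lock file; the winner writes its address into the file so the others can find it.

```python
import threading


class FakeLockService:
    """Hypothetical in-memory stand-in for a Chubby cell (not the real API)."""

    def __init__(self) -> None:
        self._mutex = threading.Lock()
        self._holders: dict[str, str] = {}   # lock file -> holder id
        self._contents: dict[str, str] = {}  # lock file -> file contents

    def try_acquire(self, path: str, client_id: str) -> bool:
        # Non-blocking: succeeds only if nobody holds the lock yet.
        with self._mutex:
            if path in self._holders:
                return False
            self._holders[path] = client_id
            return True

    def set_contents(self, path: str, data: str) -> None:
        # Locks are advisory, so the service itself does not check the holder.
        with self._mutex:
            self._contents[path] = data

    def get_contents(self, path: str) -> str:
        with self._mutex:
            return self._contents[path]


def run_election(service: FakeLockService, path: str,
                 client_id: str, address: str) -> str:
    """Return 'leader' if this replica won the lock, else 'follower'."""
    if service.try_acquire(path, client_id):
        service.set_contents(path, address)  # advertise the leader's address
        return "leader"
    return "follower"


service = FakeLockService()
LOCK_FILE = "/ls/cell/app/leader"  # hypothetical path
roles = {
    name: run_election(service, LOCK_FILE, name, f"{name}:8080")
    for name in ("replica-a", "replica-b", "replica-c")
}
```

Followers would then read the lock file to learn who the leader is. In a real deployment they would also watch for the lock becoming free so that a new election can run.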

Files, Directories and Handles

Agenda

- Nodes

- Metadata

- Handles

Chubby's file system interface is a tree of files and directories (directories can contain both files and sub-directories), each of which is called a node.

Nodes

- Any node can act as an advisory reader/writer lock.

- Nodes can be ephemeral or permanent.

- Ephemeral files are used as temporary files and act as an indicator to others that a client is alive.

- Ephemeral files are also deleted if no client has them open.

- Ephemeral directories are also deleted if they are empty.

- Any node can be explicitly deleted.
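The lifetime rule for ephemeral files can be pictured with a small model. This is a sketch under assumptions: `EphemeralTable` is a hypothetical class that tracks only which clients have a file open, and it ignores sessions, directories, and permanent nodes.

```python
class EphemeralTable:
    """Hypothetical tracker: an ephemeral file exists while someone has it open."""

    def __init__(self) -> None:
        self._open_by: dict[str, set[str]] = {}  # file name -> client ids

    def open(self, name: str, client: str) -> None:
        self._open_by.setdefault(name, set()).add(client)

    def close(self, name: str, client: str) -> None:
        holders = self._open_by.get(name, set())
        holders.discard(client)
        if not holders:
            # No client has the file open any more: it is deleted.
            self._open_by.pop(name, None)

    def exists(self, name: str) -> bool:
        return name in self._open_by


table = EphemeralTable()
table.open("/ls/cell/app/alive-worker-1", "worker-1")  # hypothetical path
alive_before = table.exists("/ls/cell/app/alive-worker-1")
table.close("/ls/cell/app/alive-worker-1", "worker-1")
alive_after = table.exists("/ls/cell/app/alive-worker-1")
```

Other clients can treat the presence of the file as a liveness signal for `worker-1`.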

Metadata

- Metadata for each node includes ACL(Access control list), 4 monotonically increasing 64-bit numbers, and a checksum.

- ACL

- Monotonically increasing 64-bit numbers: These numbers allow clients to detect changes easily.

- Checksum : Chubby exposes a 64-bit file-content checksum so clients may tell whether files differ.

Handles

- Clients open nodes to obtain handles(similar to Unix File Descriptors). Handles include:

Locks Sequencers and Lock-Delays

Agenda

- Locks

- Sequencer

- Lock-Delay

Locks

- Each chubby node can act as a reader-writer lock in the following two ways:

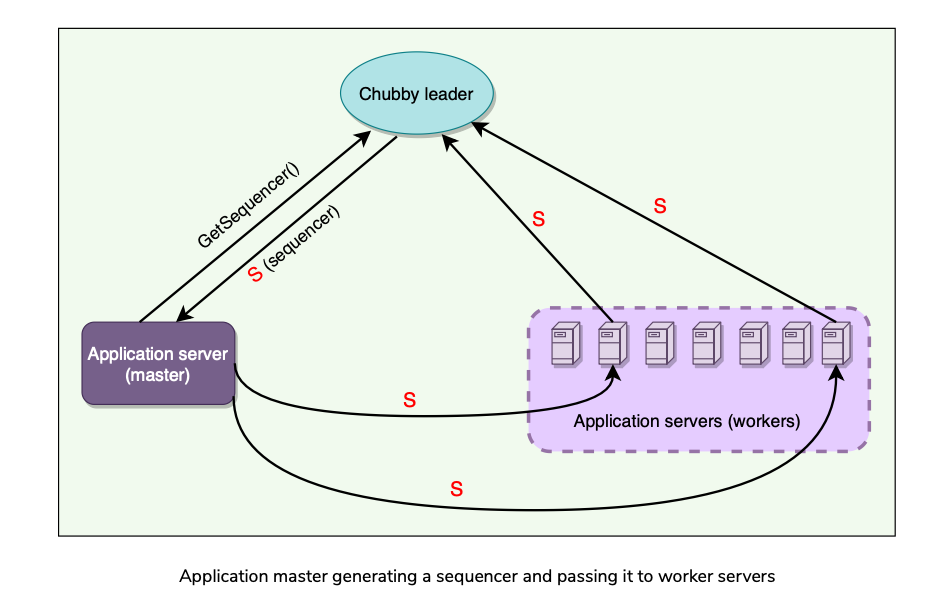

Sequencer

- With distributed systems, receiving messages out of order is a problem.

- Chubby uses sequence numbers to solve this problem.

- In other words, the application servers reach distributed consensus (total order broadcast) by electing a leader through a distributed lock service (Chubby), which itself relies on Paxos for consensus.

- After acquiring a lock on a file, a client can immediately request a Sequencer, which is an opaque byte string describing the state of the lock.

- An application’s master server can generate a sequencer and send it with any internal order to other application servers.

- Application servers that receive orders from a primary can check with Chubby whether the sequencer is still valid and does not belong to a stale primary (to handle the split-brain scenario).
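The stale-primary check can be sketched as follows. Assumptions are labeled in the code: `FakeLockServer` is a hypothetical in-memory server, and the sequencer is modeled as a lock name plus a generation counter that increases on every acquisition. The real sequencer is an opaque byte string.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sequencer:
    lock_name: str
    generation: int  # assumption: bumped on every successful acquisition


class FakeLockServer:
    """Hypothetical in-memory lock server used only for this illustration."""

    def __init__(self) -> None:
        self._generation: dict[str, int] = {}
        self._holder: dict[str, str | None] = {}

    def acquire(self, lock_name: str, client: str) -> Sequencer:
        if self._holder.get(lock_name) is not None:
            raise RuntimeError("lock is held")
        self._generation[lock_name] = self._generation.get(lock_name, 0) + 1
        self._holder[lock_name] = client
        return Sequencer(lock_name, self._generation[lock_name])

    def expire(self, lock_name: str) -> None:
        # The holder's session ended, so the lock becomes free.
        self._holder[lock_name] = None

    def check_sequencer(self, seq: Sequencer) -> bool:
        # Valid only if the lock is held and the generation still matches.
        return (self._holder.get(seq.lock_name) is not None
                and self._generation.get(seq.lock_name) == seq.generation)


server = FakeLockServer()
LOCK = "/ls/cell/app/leader"                  # hypothetical path
old_seq = server.acquire(LOCK, "primary-1")
server.expire(LOCK)                           # primary-1 becomes unreachable
new_seq = server.acquire(LOCK, "primary-2")
```

An application server that receives an order tagged with `old_seq` would reject it, because `check_sequencer(old_seq)` is now false while `check_sequencer(new_seq)` is true.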

Lock-Delay

- For file servers (or external services) that do not support sequencers (or fencing tokens to protect against delayed packets belonging to an older lock), Chubby provides a lock-delay period to protect against message delays and server restarts.

- If a client releases a lock in the normal way, it is immediately available for other clients to claim, as one would expect.

- However, if a lock becomes free because the holder has failed or become inaccessible, the lock server will prevent other clients from claiming the lock for a period called the lock-delay.

- While imperfect, the lock-delay protects unmodified servers and clients from everyday problems caused by message delays and restarts.
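A minimal sketch of the difference between a clean release and a failed holder. `DelayedLock` is a hypothetical class, time is passed in explicitly so the behavior is deterministic, and the 60-second delay is an arbitrary illustrative value.

```python
class DelayedLock:
    """Hypothetical lock that enforces a lock-delay after holder failure."""

    def __init__(self, lock_delay_s: float) -> None:
        self.lock_delay_s = lock_delay_s
        self.holder: str | None = None
        self.blocked_until = 0.0

    def try_acquire(self, client: str, now: float) -> bool:
        if self.holder is not None or now < self.blocked_until:
            return False
        self.holder = client
        return True

    def release(self) -> None:
        # Normal release: the lock is immediately available again.
        self.holder = None

    def holder_failed(self, now: float) -> None:
        # The holder failed or became unreachable: free the lock,
        # but keep others out until the lock-delay has passed.
        self.holder = None
        self.blocked_until = now + self.lock_delay_s


lock = DelayedLock(lock_delay_s=60.0)
lock.try_acquire("client-a", now=0.0)
lock.holder_failed(now=10.0)
blocked = lock.try_acquire("client-b", now=30.0)  # still inside the delay
granted = lock.try_acquire("client-b", now=70.0)  # delay has elapsed
```

The delay gives requests that the failed holder already sent time to arrive or be dropped before a new holder starts issuing its own.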

Session and Events

Agenda

- What is a Chubby Session?

- Session Protocol

- What is Keep Alive

- Session Optimization

- Failovers

What is a Chubby Session?

- A relationship b/w Chubby Cell and a Client.

- It exists for some interval of time and is maintained by periodic handshakes called keepalives.

- A client's handles, locks, and cached data remain valid only while its session remains valid.

Session Protocol

- Client requests a new session from the Chubby cell's master.

- Session ends if the client explicitly ends it or it has been idle.

- Each session has an associated lease, which is the time interval during which the master guarantees not to terminate the session unilaterally. End of this interval is called Session Lease Timeout.

- Master advances session lease timeout in 3 circumstances:

What is Keep Alive

- Keepalive is a way for a client to maintain a constant session with Chubby Cell.

- Steps:

- Google experimentation showed that 93% of RPC requests are KeepAlives.

- How can we reduce the keepalives?

Session Optimization

- Piggybacking events(using a different event to transmit some additional detail)

- Local Lease

- Jeopardy

- Grace Period

- Original(Initial chubby session):

- Optimization Attempt 1:

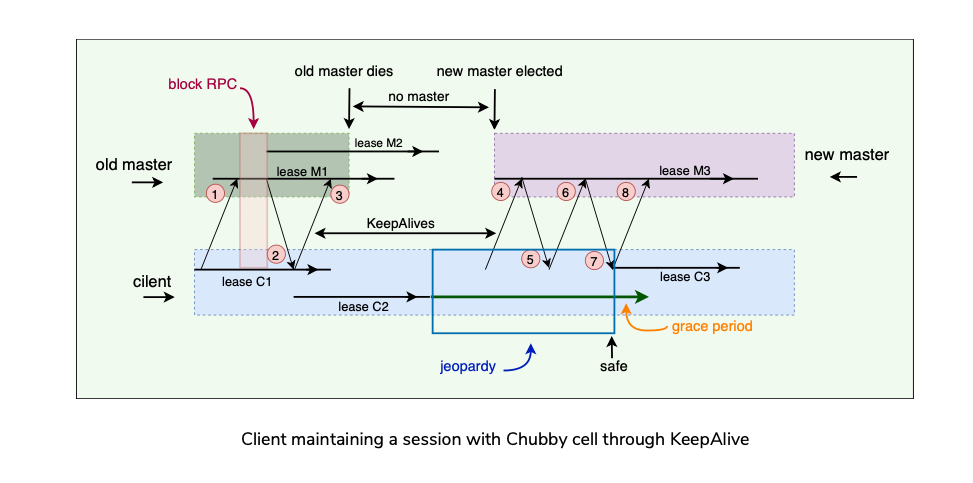

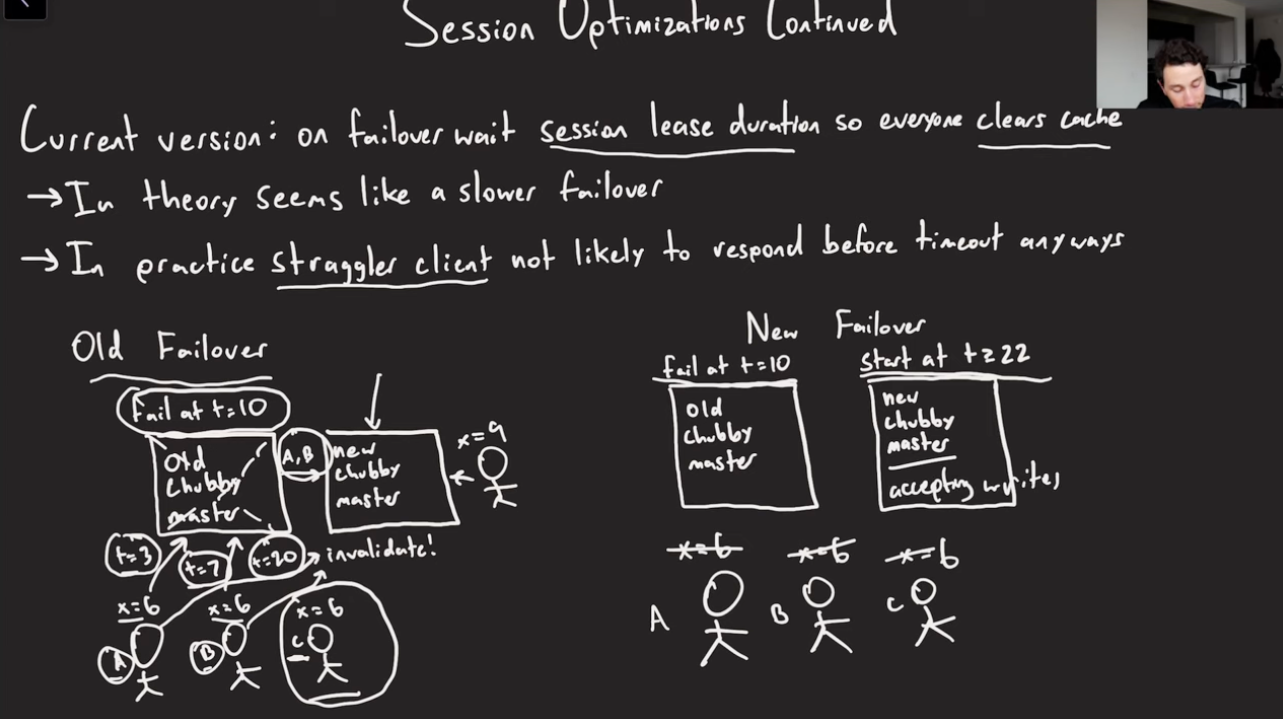

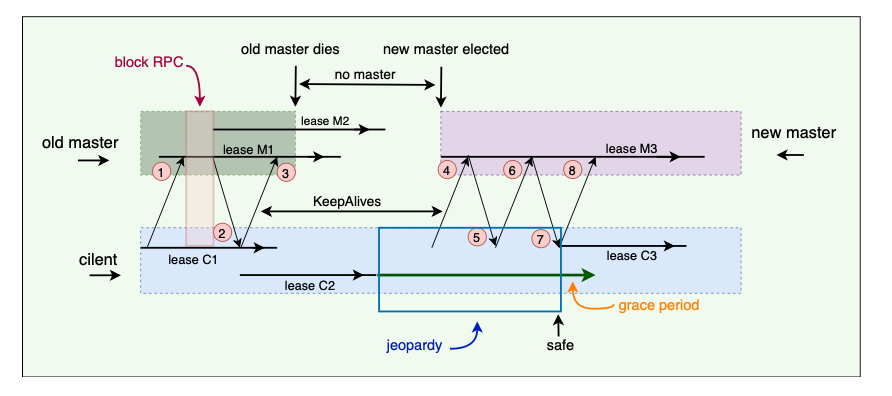

Failovers

Failover happens when the master fails or otherwise loses membership. Chubby typically takes b/w 5-30 seconds for fail-over.

Summary of things that happen in a master failover.

Client has lease M1 (& local lease C1) with master and pending KeepAlive request.

Master starts lease M2 and replies to the KeepAlive request.

Client extends the local lease to C2 and makes a new KeepAlive call. Master dies before replying to the next KeepAlive. So, no new leases can be assigned. Client’s C2 lease expires, and the client library flushes its cache and informs the application that it has entered jeopardy. The grace period starts on the client.

Eventually, a new master is elected and initially uses a conservative approximation M3 of the session lease that its predecessor may have had for the client. Client sends KeepAlive to new master (4).

The first KeepAlive request from the client to the new master is rejected (5) because it has the wrong master epoch number (described in the next section).

Client retries with another KeepAlive request.

Re-tried KeepAlive succeeds. Client extends its lease to C3 and optionally informs the application that its session is no longer in jeopardy (session is in the safe mode now).

Client makes a new KeepAlive call, and the normal protocol works from this point onwards.

Because the grace period was long enough to cover the interval between the end of lease C2 and the beginning of lease C3, the client saw nothing but a delay. If the grace period was less than that interval, the client would have abandoned the session and reported the failure to the application.
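The client-side view of this sequence can be sketched as a small state machine. This is an illustration under assumptions: `ClientSession` is a hypothetical class, the clock is passed in explicitly, and the lease and grace-period lengths are arbitrary example values.

```python
class ClientSession:
    """Hypothetical client-side session: safe -> jeopardy -> expired."""

    def __init__(self, lease_s: float, grace_s: float, now: float) -> None:
        self.lease_s = lease_s
        self.grace_s = grace_s
        self.lease_expiry = now + lease_s  # end of the local lease
        self.state = "safe"

    def tick(self, now: float) -> str:
        """Re-evaluate the session state at time `now`."""
        if self.state == "expired":
            return self.state
        if now >= self.lease_expiry + self.grace_s:
            self.state = "expired"    # grace period ran out: session is lost
        elif now >= self.lease_expiry:
            self.state = "jeopardy"   # local lease ran out: flush cache, wait
        return self.state

    def on_keepalive_reply(self, now: float) -> None:
        """A master answered a KeepAlive: extend the local lease."""
        if self.tick(now) == "expired":
            return                    # too late, the session was abandoned
        self.lease_expiry = now + self.lease_s
        self.state = "safe"


session = ClientSession(lease_s=12.0, grace_s=45.0, now=0.0)
timeline = [session.tick(5.0), session.tick(12.0)]  # lease runs out at t=12
session.on_keepalive_reply(now=30.0)                # new master replies in time
timeline.append(session.state)
```

Here the reply arrives 18 seconds into the grace period, so the application sees only a delay. A reply after `lease_expiry + grace_s` would find the session already expired.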

Master Election and Chubby Events?

Initializing a newly elected Master

- A newly elected master proceeds as follows:

- Picks a new Epoch Number: To differentiate itself from the previous master. Clients are required to present an epoch number on every call. Master rejects calls from clients using older epoch numbers. This ensures that the new master will not respond to a very old packet that was sent to the previous master.

- Responds to master-location requests: but doesn’t respond to session related operations yet.

- Build in-memory data structures:

- Let clients perform keep-alives:

- Emits a fail-over event to each session:

- Wait: Master waits until each session acknowledges the fail-over event or lets its session expire.

- Allow all operations to proceed.

- Honor older handles by clients:

- Delete Ephemeral files:
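The epoch check in the first step can be sketched like this. `EpochMaster` is a hypothetical class, and returning the current epoch in the rejection is an assumption made so the retry can be shown; the notes do not say how the client learns the new epoch.

```python
class EpochMaster:
    """Hypothetical master that tags itself with an epoch number."""

    def __init__(self, epoch: int) -> None:
        self.epoch = epoch

    def handle(self, request_epoch: int, op: str) -> tuple[str, object]:
        # A call carrying an older epoch was addressed to a previous master.
        if request_epoch < self.epoch:
            # Assumption: the rejection carries the current epoch.
            return ("rejected", self.epoch)
        return ("ok", op)


new_master = EpochMaster(epoch=8)           # elected after a fail-over
first = new_master.handle(7, "KeepAlive")   # client still uses the old epoch
retry = new_master.handle(first[1], "KeepAlive")
```

This mirrors the fail-over walkthrough, where the first KeepAlive to the new master is rejected and the retry succeeds.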

Chubby Events

- Chubby supports a simple event mechanism to let its clients subscribe to events.

- Events are delivered asynchronously via callbacks from the chubby library.

- Clients subscribe to a range of events while creating a handle.

- Example of events from Server to Chubby Client:

- Additionally Chubby client sends the following session events to the application:

Caching

Chubby Cache

- Caching is important because Chubby's workload is read-heavy rather than write-heavy.

- Chubby clients cache file contents, node metadata, and information on open handles in a consistent, write-through cache in clients’ memory.

- Chubby must maintain consistency b/w file, its replicas, and cache as well.

- Clients maintain their cache by a lease mechanism, and flush the cache when the lease expires.

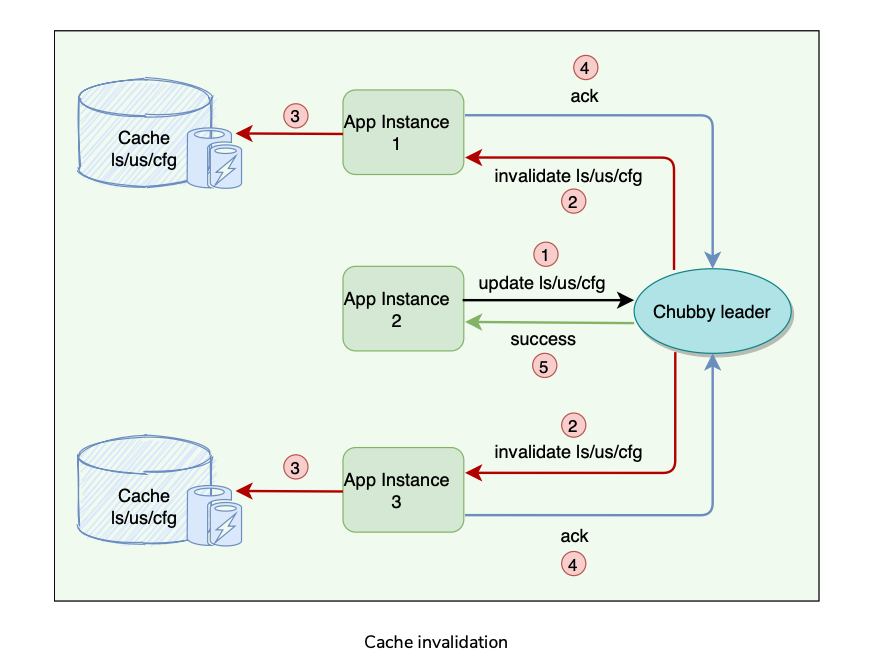

Cache Invalidation

- Protocol for cache invalidation when file data or metadata is changed:

Question: While the master is waiting for acknowledgments, are other clients allowed to read the file?

Answer: During the time the master is waiting for the acknowledgments from clients, the file is treated as ‘uncachable.’ This means that the clients can still read the file but will not cache it. This approach ensures that reads always get processed without any delay. This is useful because reads outnumber writes.

Question: Are clients allowed to cache locks? If yes, how is it used?

Answer: Chubby allows its clients to cache locks, which means the client can hold locks longer than necessary, hoping that they can be used again by the same client.

Question: Are clients allowed to cache open handles?

Answer: Chubby allows its clients to cache open handles. This way, if a client tries to open a file it has opened previously, only the first open() call goes to the master.
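The behavior described in the first answer can be sketched with a toy master. Everything here is a simplified assumption: `CachingMaster` is a hypothetical class, acknowledgments are delivered by direct method calls, and lease expiry is ignored.

```python
class CachingMaster:
    """Hypothetical master that delays a write until caches are invalidated."""

    def __init__(self) -> None:
        self.data: dict[str, str] = {}
        self.cachers: dict[str, set[str]] = {}              # file -> caching clients
        self.pending: dict[str, tuple[str, set[str]]] = {}  # file -> (value, waiting)

    def read(self, name: str, client: str) -> tuple[str, bool]:
        """Return (contents, cacheable). Reads are never blocked."""
        cacheable = name not in self.pending
        if cacheable:
            self.cachers.setdefault(name, set()).add(client)
        return self.data[name], cacheable

    def begin_write(self, name: str, value: str) -> None:
        # Invalidate every cached copy; the write waits for all acks.
        waiting = self.cachers.pop(name, set())
        if not waiting:
            self.data[name] = value
            return
        self.pending[name] = (value, waiting)

    def ack_invalidation(self, name: str, client: str) -> None:
        value, waiting = self.pending[name]
        waiting.discard(client)
        if not waiting:
            # Last acknowledgment received: apply the write.
            self.data[name] = value
            del self.pending[name]


master = CachingMaster()
master.data["config"] = "v1"
r1 = master.read("config", "client-a")   # client-a caches v1
master.begin_write("config", "v2")       # waits for client-a's ack
r2 = master.read("config", "client-b")   # served, but marked uncachable
master.ack_invalidation("config", "client-a")
r3 = master.read("config", "client-b")
```

While the invalidation is outstanding, `client-b` still gets an answer but is told not to cache it, which keeps reads fast without letting a stale copy into any cache.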

Database

Agenda

- Backup

- Mirroring

How Chubby uses a database for storage.

Initially, Chubby used a replicated version of Berkeley DB to store its data. Later, the Chubby team felt that using Berkeley DB exposes Chubby to more risks, so they decided to write a simplified custom database with the following characteristics:

Backup

- For recovery in case of failure, all database transactions are stored in a transaction log (a write-ahead log).

- As this transaction log can become very large over time, every few hours, the master of each Chubby cell writes a snapshot of its database to a GFS server in a different building.

- The use of a separate building ensures both that the backup will survive building damage, and that the backups introduce no cyclic dependencies in the system;

- Once a snapshot is taken, the previous transaction log is deleted. Therefore, at any time, the complete state of the system is determined by the last snapshot together with the set of transactions from the transaction log.

- Backup databases are used for disaster recovery and to initialize the database of a newly replaced replica without placing a load on other replicas.
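The relationship between the snapshot and the transaction log can be sketched with a toy store. `TinyStore` is a hypothetical in-memory class; a real system would write the log to durable storage and the snapshot to a remote file system.

```python
class TinyStore:
    """Hypothetical key/value store with a write-ahead log and snapshots."""

    def __init__(self) -> None:
        self.state: dict[str, str] = {}
        self.snapshot: dict[str, str] = {}
        self.log: list[tuple[str, str]] = []  # entries since the last snapshot

    def put(self, key: str, value: str) -> None:
        self.log.append((key, value))  # record the transaction first
        self.state[key] = value        # then apply it

    def take_snapshot(self) -> None:
        # Copy the full state, then drop the log entries it already covers.
        self.snapshot = dict(self.state)
        self.log.clear()

    def recover(self) -> dict[str, str]:
        # State = last snapshot + replay of the log written after it.
        state = dict(self.snapshot)
        for key, value in self.log:
            state[key] = value
        return state


store = TinyStore()
store.put("/ls/cell/a", "1")
store.put("/ls/cell/b", "2")
store.take_snapshot()
store.put("/ls/cell/a", "3")
recovered = store.recover()
```

After the snapshot the log holds only one entry, yet replaying it over the snapshot reproduces the live state exactly.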

Mirroring

- Mirroring is a technique that allows a system to automatically maintain multiple copies. Chubby allows a collection of files to be mirrored from one cell to another.

- Mirroring is fast because the files are small.

- A special “global” cell subtree /ls/global/master that is mirrored to the subtree /ls/cell/replica in every other Chubby cell.

- Various files in which Chubby cells and other systems advertise their presence to monitoring services.

- Pointers to allow clients to locate large data sets such as Bigtable cells, and many configuration files for other systems.

Scaling Chubby

Agenda

- Proxies

- Partitioning

- Learning

Chubby’s clients are individual processes, so Chubby handles more clients than expected. At Google, 90,000+ clients communicate with a single Chubby server.

Techniques used to reduce communication with the master(since read heavy):

- Minimize request rate by creating more chubby cells so that clients almost always use a nearby cell(found with DNS) to avoid reliance on remote machines.

- Minimize KeepAlives Load: KeepAlives are by far the dominant types of request.

- Caching: Clients cache file data, metadata, handles, locks etc.

- Simplified protocol conversions:

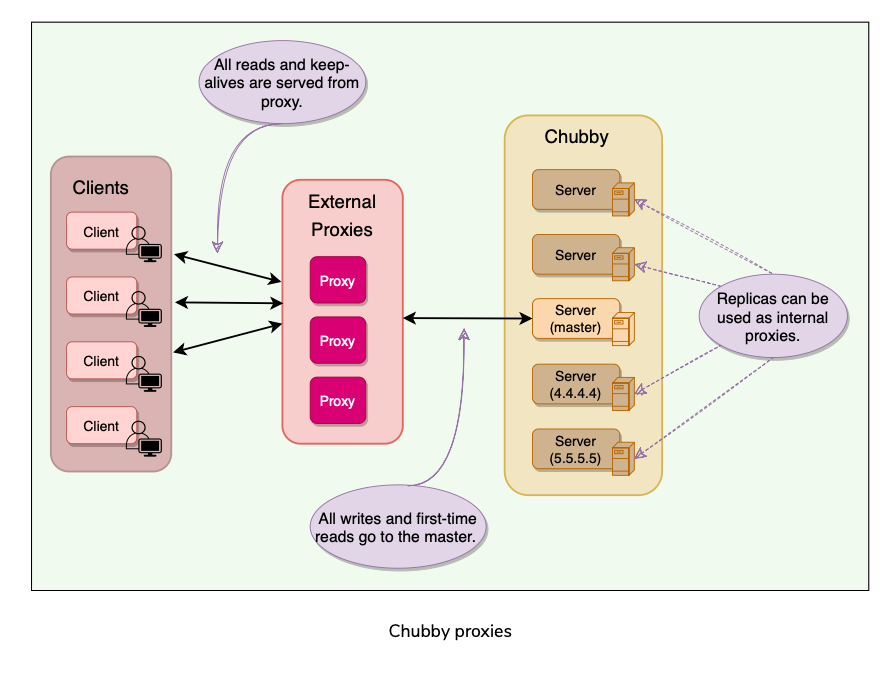

Proxies

- A proxy is an additional server that can act on behalf of the actual server.

- A Chubby proxy can handle KeepAlives and read requests.

- All writes and first-time reads pass through the cache to reach the master

- Proxy responsible for invalidating client’s cache as well.

Partitioning

- Need to support 100K clients. How would chubby do that?

- Chubby’s interface (files & directories) was designed such that namespaces can easily be partitioned between multiple Chubby cells if needed.

- Chubby can partition nodes within a large directory(with lots of sub-directories).

- Scenarios in which partitioning does not help scale:

Learning

- Lack of aggressive caching: Initially, clients were not caching the absence of files or open file handles. An abusive client could write loops that retry indefinitely when a file is not present or poll a file by opening it and closing it repeatedly when one might expect they would open the file just once. Chubby educated its users to make use of aggressive caching for such scenarios.

- Lack of quotas: Chubby was never intended to be used as a storage system for large amounts of data, so it has no storage quotas. In hindsight, this was naive. To handle this, Chubby later introduced a limit on file size (256kBytes).

- Publish/subscribe: There have been several attempts to use Chubby’s event mechanism as a publish/subscribe system. Chubby is a strongly consistent system, and the way it maintains a consistent cache makes it a slow and inefficient choice for publish/subscribe. Chubby developers caught and stopped such uses early on.

- Developers rarely consider availability: Developers generally fail to think about failure probabilities and wrongly assume that Chubby will always be available. Chubby educated its clients to plan for short Chubby outages so that it has little or no effect on their applications.

Chubby as a Name Service?

The authors were surprised to find that Chubby's most popular use was as a name service, in place of DNS.

DNS caching is based on a time-to-live (TTL), and it is hard to pick a good TTL value: entries may be served stale for some time (up to 60 secs).

Chubby however, via Client side Cache invalidation, provides Consistent Reads.

E.g. When starting N processes where each process looks up every other one (via DNS), that’s N^2 DNS lookups.
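A quick back-of-the-envelope calculation shows how fast this grows. The job size of 3,000 processes is an illustrative assumption; the 60-second TTL is the value mentioned above, and the function name is hypothetical.

```python
def dns_lookups_per_second(processes: int, ttl_seconds: int) -> float:
    """Steady-state lookup rate if every process re-resolves every other
    process once per TTL (N^2 lookups per TTL window)."""
    return processes * processes / ttl_seconds


rate = dns_lookups_per_second(processes=3000, ttl_seconds=60)
```

For these inputs that is 9,000,000 lookups every 60 seconds, or 150,000 lookups per second, just to keep the caches of one job fresh.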

Chubby sees a thundering herd from the reads at client startup(not cached).

Summary:

Distributed Lock Service used inside Google.

Provides coarse-grained locking(for minutes, hours or days) and is not recommended for fine-grained locking(seconds or less), although you can build a fine-grained locking system on top of Chubby. Suited to read-heavy rather than write-heavy workloads.

A Chubby cell is a Chubby Cluster(usually with 3 or 5 replicas).

Using Paxos, one replica in a Cell is chosen as master which handles all read/write requests. If the master fails, a fail-over is performed.

Each replica has a local database for files/directories/locks etc. The master's writes are replicated to the other replicas through the consensus protocol for Fault Tolerance.

Clients use a Chubby Library to communicate with Servers using RPC.

Chubby's interface is a Unix-like file system: a tree of files and directories(directories can contain files and sub-directories).

Locks: Each node(file/directory) can act as an advisory reader(shared)-writer(exclusive) lock.

Ephemeral Nodes indicate to others that a client is alive.

Metadata includes ACL, Monotonically increasing 64-bit numbers, and CheckSum.

Events mechanism between Chubby Client and server and Client and application for a variety of events like, Lock Acquired, file edited, Jeopardy, Safe etc.

Client Caching to reduce read traffic. Need consistency b/w File, Replica, and its client cache. Client cache invalidation using KeepAlive request/responses.

Clients maintain Sessions using KeepAlive RPCs.

Backup: Periodic snapshots of the database(complementing the Write-Ahead Log) are written to a GFS file server in a different building.

Mirroring: Collection of files synced from one cell to another.

System Design Patterns:

Write-Ahead Log: For Fault Tolerance, to handle master crash, all database transactions stored in a transaction log**(on local drive or on a distributed GFS?)**

Quorum: To ensure strong consistency, the master waits for write acks from a majority of replicas before reporting write success to the client.

Generation Clock: Newly elected master uses Epoch number(monotonically increasing) to avoid split brain.

Lease: Chubby Client maintains a time-bound session lease with the Master.

References:

Chubby Paper

Chubby Architecture video

Chubby vs ZooKeeper

Hierarchical Chubby

BigTable

GFS

Jordan Deep Dive

Paper Link: https://static.googleusercontent.com/media/research.google.com/en//archive/chubby-osdi06.pdf

Last updated: March 15, 2026
